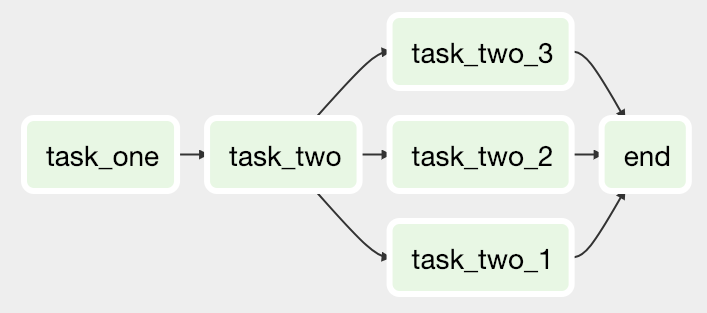

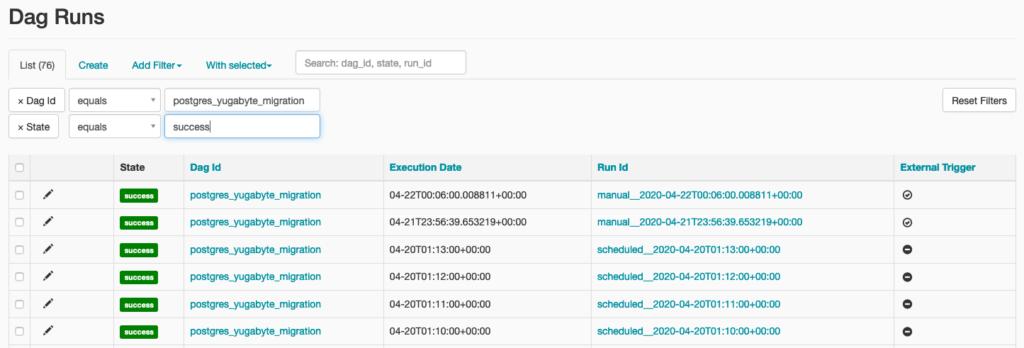

Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule, and monitor workflows. When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative.

Use Airflow to author workflows as directed acyclic graphs (DAGs) of tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on DAGs a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed.

Airflow works best with workflows that are mostly static and slowly changing. When the DAG structure is similar from one run to the next, it clarifies the unit of work and continuity. Other similar projects include Luigi, Oozie and Azkaban.

Airflow is commonly used to process data, but has the opinion that tasks should ideally be idempotent (i.e., results of the task will be the same, and will not create duplicated data in a destination system), and should not pass large quantities of data from one task to the next (though tasks can pass metadata using Airflow's XCom feature). For high-volume, data-intensive tasks, a best practice is to delegate to external services specializing in that type of work.

Airflow is not a streaming solution, but it is often used to process real-time data, pulling data off streams in batches.

Dynamic: Airflow pipelines are configuration as code (Python), allowing for dynamic pipeline generation. This allows for writing code that instantiates pipelines dynamically.

The DAG in this example is defined with the DAG decorator from Airflow. The function name will be the ID of the DAG; in this case it's called `EXAMPLE_simple`. The decorator is called with `schedule_interval` set to None (without quotes), `start_date=days_ago(2)`, and `catchup=False`; a daily schedule would instead mean the DAG runs every day at midnight. The DAG has two tasks: task_1 generates a random number and returns it, and task_2 takes that value and prints it to the logs. The way the two tasks are called determines their direction: task_2 runs after task_1 is done, and then uses the result from task_1.

Once the DAG definition file is created and placed inside the airflow/dags folder, it should appear in the list of DAGs. Now we need to unpause the DAG and trigger it if we want to run it right away. There are two options to unpause and trigger the DAG: we can use the Airflow webserver's UI or the terminal.

Run via UI

First, you should see the DAG on the list. In this example, I've run the DAG before (hence some columns already have values), but you should have a clean slate.

Now we enable the DAG (1) and trigger it (2), so it can run right away. Click the DAG ID (in this case, called EXAMPLE_simple), and you'll see the Tree View. Having triggered a new run, you'll see that the DAG is running.

Heading over to the Graph View, we can see that both tasks ran successfully.

But what about the printed output of task_2, which shows a randomly generated number? We can check that in the logs. Inside Graph View, click on task_2, and click Log. There it's possible to see the output of the task.

As an alternative to the UI, unpausing and triggering a DAG from the terminal is straightforward.
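The `EXAMPLE_simple` DAG that this walkthrough relies on can be sketched roughly as follows. This is a reconstruction under stated assumptions, not the author's exact file: it assumes Airflow 2.x (where `airflow.decorators` and `airflow.utils.dates.days_ago` are available and `schedule_interval` is accepted), the 1 to 100 range and the exact wording of the log message are guesses, and the helpers `generate_number` and `format_message` are hypothetical names introduced so the task logic can be exercised without Airflow installed.

```python
import random

try:
    # Assumes Airflow 2.x; later releases deprecate `days_ago` and `schedule_interval`.
    from airflow.decorators import dag, task
    from airflow.utils.dates import days_ago
except ImportError:
    # Without Airflow, only the plain helper functions below are defined.
    dag = task = days_ago = None


def generate_number():
    """Generate a random number (the 1-100 range is an assumption)."""
    return random.randint(1, 100)


def format_message(value):
    """Build the line that task_2 prints (exact wording is an assumption)."""
    return f"The randomly generated number is {value}."


if dag is not None:

    # schedule_interval=None (without quotes) means the DAG only runs when triggered.
    @dag(schedule_interval=None, start_date=days_ago(2), catchup=False)
    def EXAMPLE_simple():
        # The function name becomes the ID of the DAG.

        @task
        def task_1():
            return generate_number()

        @task
        def task_2(value):
            # Printed output ends up in the task log.
            print(format_message(value))

        # task_2 runs after task_1 is done and uses its result.
        task_2(task_1())

    # Calling the decorated function creates the DAG object Airflow picks up.
    example_dag = EXAMPLE_simple()
```

Saved inside the airflow/dags folder, a file like this should show up in the DAG list under the ID `EXAMPLE_simple`, with the two tasks named `task_1` and `task_2`.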

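The point about idempotent tasks can be illustrated without any Airflow machinery. The sketch below is only an illustration: `load_partition` and `warehouse` are hypothetical names, and a plain dictionary stands in for a destination system. The load replaces the data for a given run date instead of appending to it, so re-running the same task does not create duplicates.

```python
def load_partition(destination, run_date, rows):
    """Idempotent load: replace the partition for `run_date` rather than appending to it."""
    destination[run_date] = list(rows)
    return destination


# A dictionary stands in for the destination system (an assumption for illustration).
warehouse = {}
rows = [{"id": 1}, {"id": 2}]

# Running the same load twice leaves the destination in the same state.
load_partition(warehouse, "2021-01-01", rows)
load_partition(warehouse, "2021-01-01", rows)
```

An append-based load would double the rows on the second run, which is the kind of duplicated data the idempotency guideline is meant to prevent.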